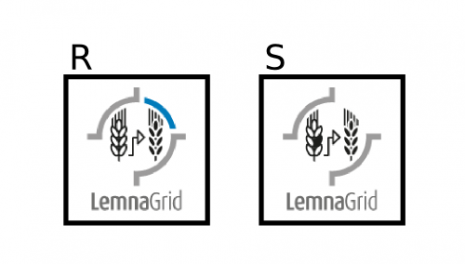

This week I wanted to repeat the VIS top image segmentation from a few days ago, and along the way something dawned on me. When facing an image segmentation task, we usually think of enumerating a set of unique image properties and designing filters accordingly. For example, a plant exhibits the basic colours green, yellow and brown, and we can in turn define a foreground colour palette. In contrast, if you have a reference image, then finding your object is similar to solving a spot-the-difference puzzle:

Can you find the three objects in S that were not present in R? The solution is at the bottom of this page***

So, given a reference image we can spot an object in a sample image by image comparison, aka image subtraction. In this installment I show you how to apply image subtraction with LemnaGrid for image segmentation.

Model

Given two images x and y, the basic formula is

- img_subtr(x, y) = x + inv(y)

where inv(y) is the inversion of y, defined as

- inv(y) = 255 – y.

For example, an image pixel can have 256 different values ranging from 0 to 255, where 0 = black, 255 = white, thus

| y | inv(y) |
| --- | --- |
| 0 | 255 |
| 255 | 0 |
| 64 | 191 |
| 192 | 63 |

Further, if img_subtr yields a value above 255, e.g. 192 + inv(64) = 383, then it will be set to 255:

- case 1: img_subtr = 0 then 0

- case 2: img_subtr = 255 then 255

- case 3: 0 < img_subtr < 255 then img_subtr (unchanged)

- case 4: img_subtr > 255 then 255

- case 5: img_subtr < 0 then 0
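The formula and the clamping cases above can be sketched in a few lines of NumPy. This is only an illustration of the arithmetic, not how LemnaGrid implements it; the function names `inv` and `img_subtr` simply mirror the notation used here, and the test values are made up.

```python
import numpy as np

def inv(y: np.ndarray) -> np.ndarray:
    """Invert an 8-bit image: inv(y) = 255 - y."""
    # Work in a wider integer type so later sums cannot wrap around.
    return 255 - y.astype(np.int32)

def img_subtr(x: np.ndarray, y: np.ndarray) -> np.ndarray:
    """Compute x + inv(y) and clamp the result to the range 0..255."""
    total = x.astype(np.int32) + inv(y)
    return np.clip(total, 0, 255).astype(np.uint8)

# Made-up pixel values, including the 192 + inv(64) = 383 example.
x = np.array([192, 64, 0, 255], dtype=np.uint8)
y = np.array([64, 192, 255, 0], dtype=np.uint8)

result = img_subtr(x, y)
print(result.tolist())  # [255, 127, 0, 255]
```

The sum is computed in a wider integer type first because adding two `uint8` arrays directly would wrap around instead of saturating at 255.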

Here is how we apply the model in practice with LemnaGrid. We can find a dark object on a bright background by setting x = sample image (s), and y = reference image (r).

In contrast, to detect a bright object on a dark background we swap the assignment of parameters, i.e. x = r, and y = s.
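To see why the order of the parameters matters, here is a small NumPy sketch with made-up one-row images. It assumes the saturating `img_subtr` from the Model section; the pixel values are hypothetical and chosen only to show one darker and one brighter pixel against a uniform reference.

```python
import numpy as np

def img_subtr(x, y):
    """Saturating x + inv(y) for 8-bit images, with inv(y) = 255 - y."""
    total = x.astype(np.int16) + (255 - y.astype(np.int16))
    return np.clip(total, 0, 255).astype(np.uint8)

# Hypothetical images: a uniform grey reference, and a sample that
# contains one darker pixel (20) and one brighter pixel (240).
r = np.array([128, 128, 128, 128], dtype=np.uint8)
s = np.array([128, 20, 240, 128], dtype=np.uint8)

dark_on_bright = img_subtr(s, r)  # x = s, y = r
bright_on_dark = img_subtr(r, s)  # x = r, y = s

print(dark_on_bright.tolist())  # [255, 147, 255, 255]
print(bright_on_dark.tolist())  # [255, 255, 143, 255]
```

In each output, unchanged pixels saturate to white (255). Only the darker pixel survives with x = s, y = r, and only the brighter pixel survives with the parameters swapped.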

Ultimately, our final model is the logical combination of both approaches:

- a) dark object detection on bright background

- b) bright object detection on dark background

Formally, we write

- IMG_SUBTR(s, r) = (s + inv(r)) OR (r + inv(s))
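One possible reading of the OR combination, sketched in NumPy: turn each subtraction into a binary mask (any pixel that is not pure white counts as "different") and combine the two masks with a logical OR. The thresholding step and the `tol` parameter are my assumptions for this sketch; they are not taken from the LemnaGrid model.

```python
import numpy as np

def img_subtr(x, y):
    """Saturating x + inv(y) for 8-bit images, with inv(y) = 255 - y."""
    total = x.astype(np.int16) + (255 - y.astype(np.int16))
    return np.clip(total, 0, 255).astype(np.uint8)

def segment(sample, reference, tol=0):
    """Boolean foreground mask from both subtraction directions.

    tol is a hypothetical tolerance (an assumption of this sketch) that
    ignores differences of at most `tol` grey levels.
    """
    dark = img_subtr(sample, reference) < 255 - tol    # a) dark object on bright background
    bright = img_subtr(reference, sample) < 255 - tol  # b) bright object on dark background
    return dark | bright

# Made-up 3x3 images for illustration.
reference = np.full((3, 3), 128, dtype=np.uint8)
sample = reference.copy()
sample[0, 0] = 20   # darker than the reference
sample[2, 2] = 240  # brighter than the reference
sample[1, 1] = 130  # small illumination difference

mask_strict = segment(sample, reference)
mask_tolerant = segment(sample, reference, tol=5)
print(int(mask_strict.sum()), int(mask_tolerant.sum()))  # 3 2
```

With `tol=0` even the two-grey-level illumination difference at the centre pixel is flagged; a small tolerance drops it while keeping the dark and bright pixels.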

Here is the complete model implemented in LemnaGrid:

Application

The input image is the same as a few days ago (a). This is what I got from last time's VIS top image analysis (b). In comparison, this is the output I get from image comparison (c).

Here is a quick summary of what shows up in the output from image subtraction:

- sample

- shadows of sample

- illumination differences between sample and reference image

- differences in background setting, e.g. differences in pot position

- differences in colour on reflective materials, e.g. metallic surfaces

Altogether, image subtraction returns fewer artefacts than the image mask approach.

Conclusion

Image subtraction is a widely applied image segmentation technique. The key to this method is finding the differences between a sample image and a reference image.

While the idea is elegant (see Model section), there are some caveats to keep in mind (see Application section).

Supplemental

I have omitted Image negate in this installment, but the result is similar. Can you spot the difference:

- x + neg(y)

Drop me a quick email if you figured it out!

***Solution to spot-the-difference puzzle!

i. a black kernel

ii. a white stripe masking the left ear

iii. a white arch masking the blue arch